Trust as a Competitive Advantage

Trust is often treated as a soft issue in AI. It is not. For many organisations, trust affects adoption, customer retention, regulatory tolerance, partner confidence, and long-term brand strength. Responsible AI practice therefore has strategic value, not only defensive value.

Core Themes in This Chapter

- Transparency and explainability

- Responsible innovation

- Long-term strategic positioning

Explainable AI as a Trust Lever

Explainable AI is central to this discussion because trust is not created by accuracy claims alone. Stakeholders are more likely to trust AI when they can understand what the system is for, what its limits are, where humans remain accountable, and how important outputs can be interpreted or challenged.

For leadership teams, explainability supports trust in four ways:

- it makes high-impact decisions easier to justify

- it improves user confidence and adoption

- it strengthens customer and regulator communication

- it helps turn responsible AI practice into a visible strategic differentiator

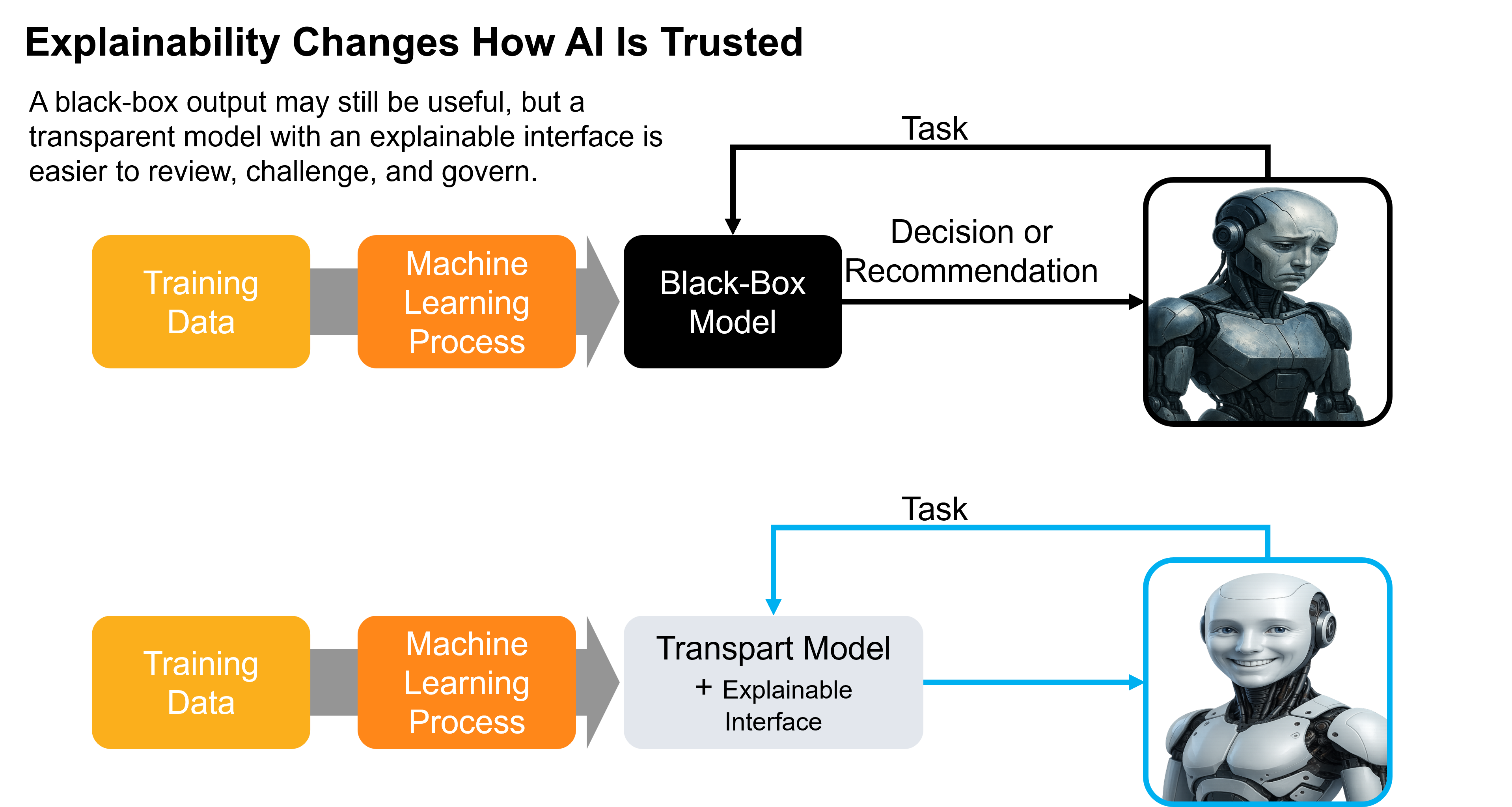

Figure: explainability does not guarantee correctness, but it improves the ability of users, managers, auditors, and regulators to understand, challenge, and govern important outputs.

Types of Explainability

Leaders do not need every technical detail, but they do need a simple way to distinguish the main forms of explainability used in practice.

- Intrinsic explainability refers to models that are more interpretable by design. Their logic is easier to inspect directly, which can support confidence and governance from the start.

- Post-hoc explainability refers to explanation methods applied after training. These help people understand outputs from more complex systems, even when the model itself is not naturally transparent.

- Model-agnostic methods can be applied across different model types. They are useful when organisations need a more flexible explanation approach across several systems.

- Model-specific methods are tied to a particular model family or architecture. They can be more tailored, but they are not always portable across the AI estate.

These categories matter because they shape what leaders can realistically expect from an explanation. Some methods help explain a model’s overall behaviour. Others help explain a single output. Some are easier to standardise across the organisation, while others work well only in narrower technical settings.

Figure: explainability methods differ by when explanation is produced and how broadly the method applies across model types.
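For readers who want to see what a post-hoc, model-agnostic method looks like in practice, the sketch below uses permutation importance, one widely used technique of this kind (chosen here as an illustration, not as a method this chapter prescribes). It never looks inside the model: it shuffles one input across cases, re-measures accuracy, and treats the drop as that input's importance. The model, feature names, and data are hypothetical toy values.

```python
import random
from typing import Callable, Sequence


def accuracy(model: Callable[[dict], int], rows: Sequence[dict], labels: Sequence[int]) -> float:
    """Share of rows where the model's output matches the recorded label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(rows)


def permutation_importance(model, rows, labels, feature, n_repeats=20, seed=0):
    """Average accuracy drop when one feature's values are shuffled across rows.

    The model is only called, never inspected, which is what makes the
    method model-agnostic and applicable after training (post-hoc).
    """
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    drops = []
    for _ in range(n_repeats):
        values = [r[feature] for r in rows]
        rng.shuffle(values)  # break the link between this feature and the outcome
        permuted = [{**r, feature: v} for r, v in zip(rows, values)]
        drops.append(baseline - accuracy(model, permuted, labels))
    return sum(drops) / n_repeats


# Hypothetical "black-box" model: approves when income exceeds 50 and ignores age.
def toy_model(row: dict) -> int:
    return int(row["income"] > 50)


# Hypothetical cases; labels are generated so the toy model matches them exactly.
rows = [
    {"income": 20, "age": 25}, {"income": 30, "age": 60},
    {"income": 40, "age": 35}, {"income": 35, "age": 50},
    {"income": 60, "age": 28}, {"income": 70, "age": 45},
    {"income": 80, "age": 33}, {"income": 90, "age": 58},
]
labels = [toy_model(r) for r in rows]

for feature in ("income", "age"):
    print(feature, permutation_importance(toy_model, rows, labels, feature))
```

Age scores zero because the toy model never reads it; income scores above zero because scrambling it breaks the decisions. On real systems this is normally computed on held-out data, often with a library implementation such as scikit-learn's `sklearn.inspection.permutation_importance`, rather than hand-rolled as here.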

Global and Local Explanations

Leaders should also distinguish between two different kinds of explanation:

- Global explanation shows what generally matters across many decisions or outputs. It helps management understand the model’s overall logic, major drivers, recurring patterns, and structural weaknesses.

- Local explanation shows why one specific prediction, score, recommendation, or action occurred. It is especially important when an individual outcome needs to be justified, challenged, audited, or escalated.

Both are useful, but they answer different leadership questions. Global explanation supports governance, model review, and strategic confidence. Local explanation supports case-level accountability and operational trust.

Figure: global explanation helps leaders understand what matters overall; local explanation helps them understand why one specific decision was made.
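The difference can be seen in a small, intrinsically interpretable model. The sketch below assumes a hypothetical linear credit-style score; the weights, feature names, and applicants are made up for illustration and do not come from this chapter. The global view averages each feature's influence across many cases, while the local view breaks one case's score into per-feature contributions.

```python
# Hypothetical weights for an intrinsically interpretable linear scoring model.
WEIGHTS = {"income": 0.04, "debt_ratio": -2.0, "years_employed": 0.1}
INTERCEPT = -1.0


def score(case: dict) -> float:
    """Linear score: intercept plus the weighted sum of the features."""
    return INTERCEPT + sum(w * case[f] for f, w in WEIGHTS.items())


def local_explanation(case: dict) -> dict:
    """Why this one case scored as it did: each feature's contribution w * x.

    The contributions plus the intercept add back up to the score.
    """
    return {f: w * case[f] for f, w in WEIGHTS.items()}


def global_explanation(cases: list) -> dict:
    """What matters overall: mean absolute contribution of each feature
    across many cases, ordered from most to least influential."""
    totals = {f: 0.0 for f in WEIGHTS}
    for case in cases:
        for f, contribution in local_explanation(case).items():
            totals[f] += abs(contribution)
    means = {f: t / len(cases) for f, t in totals.items()}
    return dict(sorted(means.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical applicants.
cases = [
    {"income": 50, "debt_ratio": 0.5, "years_employed": 2},
    {"income": 20, "debt_ratio": 0.2, "years_employed": 10},
    {"income": 80, "debt_ratio": 0.6, "years_employed": 1},
]

print("global:", global_explanation(cases))
print("local, second applicant:", local_explanation(cases[1]))
```

In this toy data, income dominates the global view, yet the second applicant's largest positive contribution comes from years of employment: the two views can rank the same features differently, which is why they answer different leadership questions. Mean absolute contribution is only one simple global summary; because it depends on the scale of each input, raw weights alone should not be compared across features measured in different units.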

Who Needs Explainability and Why

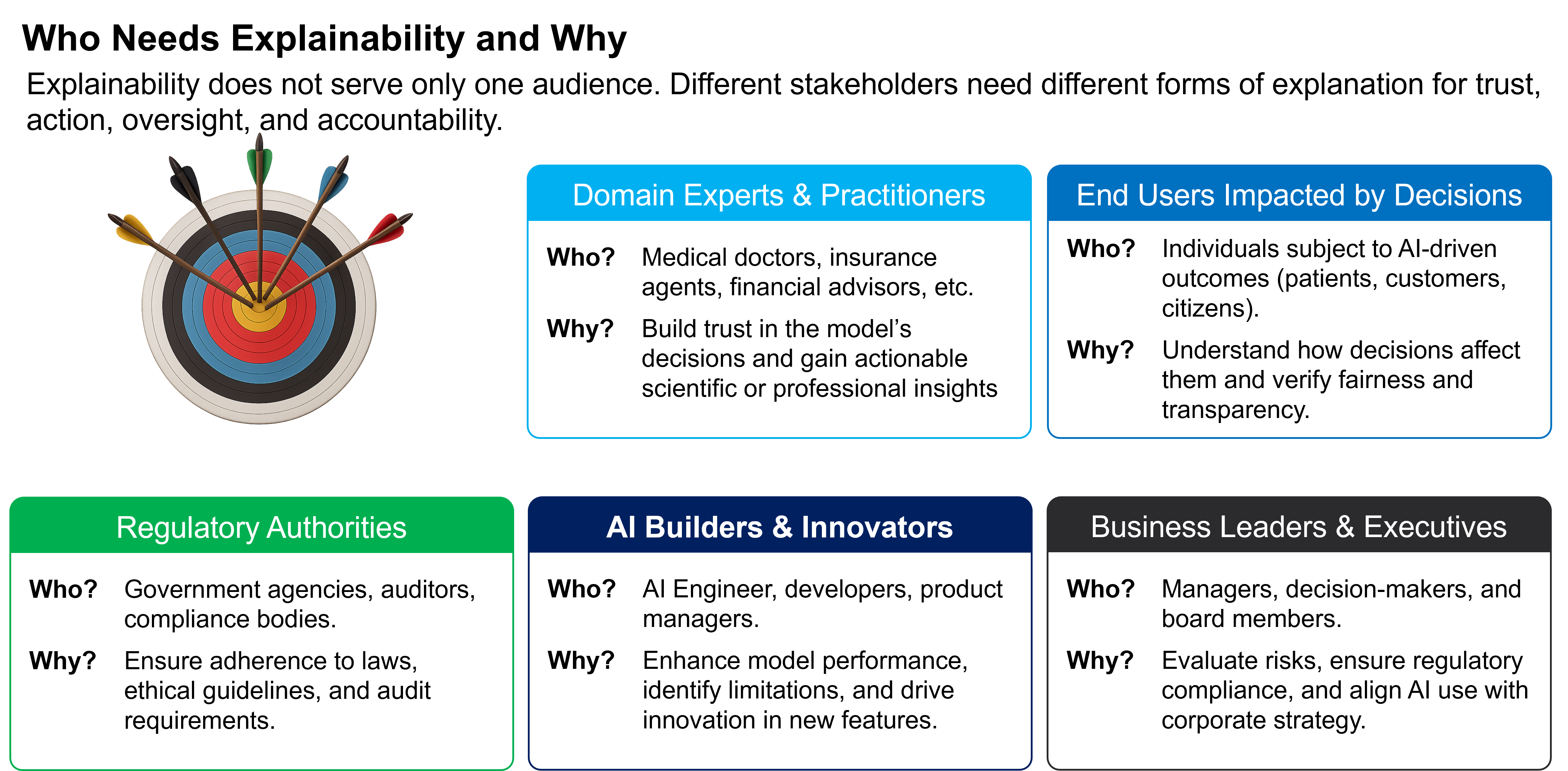

Explainability is not a single-audience requirement. Different stakeholders need different levels and forms of explanation depending on the decisions they make, the risks they carry, and how closely AI outputs affect them.

Domain experts need explanations they can use in practice. End users need enough clarity to understand how decisions affect them. Regulators and auditors need evidence that decisions can be reviewed and defended. Builders need explanations that help them improve the system. Executives need explanations that support governance, accountability, and strategic judgment.

Figure: explainability serves different purposes for different stakeholders, from operational trust and debugging to regulatory review and executive oversight.

Leadership Lens

The organisations that sustain AI advantage are unlikely to be those that move fastest without control. They are more likely to be those that combine speed with credibility, clarity, and dependable governance.

Key Questions for Leaders

- What does trust mean in our sector and stakeholder context?

- Which transparency practices create real confidence rather than generic messaging?

- How can responsible AI become part of our market position?